Strategy #6. Keyword Density

Search engines love relevant text. They try to match the keywords a user types into the search box with the keywords that appear on your website.

Keyword density analysis looks at one of the most important ratios: how often these keywords appear on an individual webpage.

What is keyword density? It’s the number of times a keyword appears on a page divided by the total number of words on that page, usually displayed as a percentage:

Keyword Density = (Number of times keyword appears on page / Total word count on page) × 100%

So, if a page has 100 words and a keyword appears on it 5 times, the page has a keyword density of 5% (5 / 100 = 0.05, or 5%).
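
If you want to check the math yourself, here is a minimal Python sketch of the formula above. It assumes a simple definition of a “word” (runs of letters, digits and apostrophes, ignoring case) and a single-word keyword; SEO tools may split words differently, so their numbers can vary slightly. The function names and the sample keyword are hypothetical.

```python
import re


def tokenize(text: str) -> list[str]:
    """Split text into lowercase words (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text: str, keyword: str) -> float:
    """Percentage of words on the page that equal a single-word keyword."""
    words = tokenize(text)
    if not words:
        return 0.0  # avoid dividing by zero on an empty page
    hits = sum(1 for word in words if word == keyword.lower())
    return 100 * hits / len(words)


# A 100-word page on which the keyword "seo" appears 5 times.
page = " ".join(["seo"] * 5 + ["filler"] * 95)
print(f"{keyword_density(page, 'seo'):.1f}%")  # 5.0%
```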

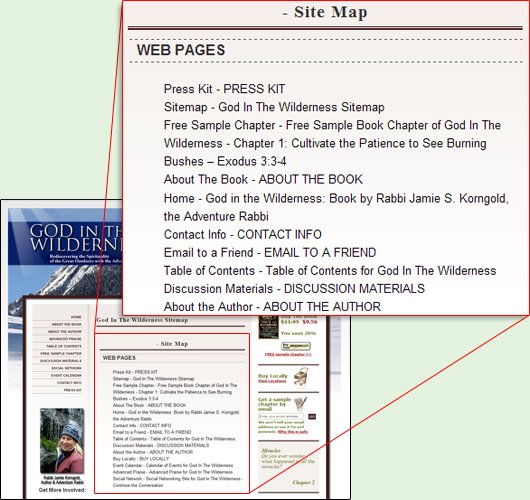

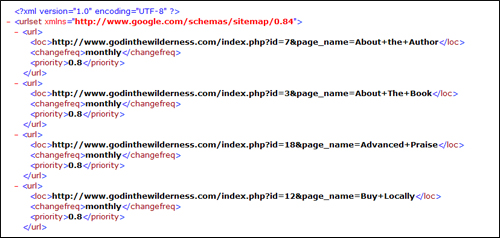

In a real-life example, the search term “website design” has an overall keyword density of 4.13% on this page:


(10 instances of the keyword phrase / 242 total words on the page = 4.13%)
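
Multi-word phrases like “website design” need a slightly different count. The sketch below assumes the same convention as the example above: each occurrence of the whole phrase counts once and is divided by the total number of words on the page (some tools use a different convention, so compare like with like). The helper names are illustrative, not taken from any particular SEO tool.

```python
import re


def tokenize(text: str) -> list[str]:
    """Split text into lowercase words (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def count_phrase(words: list[str], phrase: str) -> int:
    """Count non-overlapping occurrences of a multi-word phrase in a word list."""
    target = tokenize(phrase)
    n = len(target)
    if n == 0:
        return 0  # an empty phrase never counts
    count = i = 0
    while i <= len(words) - n:
        if words[i:i + n] == target:
            count += 1
            i += n  # jump past the match so occurrences don't overlap
        else:
            i += 1
    return count


def phrase_density(text: str, phrase: str) -> float:
    """Phrase occurrences divided by total word count, as a percentage."""
    words = tokenize(text)
    if not words:
        return 0.0
    return 100 * count_phrase(words, phrase) / len(words)


# The arithmetic from the example: 10 occurrences on a 242-word page.
print(f"{100 * 10 / 242:.2f}%")  # 4.13%

sample = "Website design matters. Good website design sells."
print(f"{phrase_density(sample, 'website design'):.2f}%")  # 28.57% (2 of 7 words)
```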

However, not all keywords on a page are treated the same. Keywords in the title tags, page name and section headings are often given higher weight than keywords that appear in the regular content area of the page.
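
To illustrate the idea of placement weighting, here is a hedged sketch that scores a keyword by where it appears on the page. The weights in `AREA_WEIGHTS` are made-up values chosen only for illustration; search engines do not publish how much extra weight they give title tags, page names or headings.

```python
import re

# Hypothetical weights for illustration only; not any search engine's real values.
AREA_WEIGHTS = {"title": 3.0, "page_name": 2.0, "headings": 2.0, "body": 1.0}


def count_keyword(text: str, keyword: str) -> int:
    """Count case-insensitive whole-word matches of a keyword or phrase."""
    pattern = r"\b" + re.escape(keyword) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


def weighted_keyword_score(areas: dict[str, str], keyword: str) -> float:
    """Sum keyword counts per page area, each multiplied by that area's weight."""
    return sum(
        AREA_WEIGHTS.get(area, 1.0) * count_keyword(text, keyword)
        for area, text in areas.items()
    )


page = {
    "title": "Website Design Services",
    "headings": "Why Website Design Matters",
    "body": "Our website design team builds fast, clean sites.",
}
print(weighted_keyword_score(page, "website design"))  # 6.0 (3.0 + 2.0 + 1.0)
```

The same single mention is worth three times as much in the title as in the body here, which is the intuition behind the paragraph above, even though the real multipliers are unknown.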

Here’s how the keywords break down in the different areas of the site:


So, how much keyword density is too much? It depends on which study you read, but it’s generally best to keep your keyword density between 3% and 6%. Go much higher, and you risk being penalized for trying to spam the search engines.
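
If you track density across many pages, a tiny helper can flag the ones that fall outside the target range. The bounds are parameters because, as noted, the right numbers depend on which study you follow; the defaults simply mirror the 3% to 6% rule of thumb in this article and are not a published search-engine threshold. The function name is hypothetical.

```python
def classify_density(density_pct: float, low: float = 3.0, high: float = 6.0) -> str:
    """Label a keyword density (in percent) against a target range.

    Defaults follow the 3% to 6% rule of thumb; adjust them to taste.
    """
    if density_pct < low:
        return "below range"
    if density_pct > high:
        return "above range: possible keyword stuffing"
    return "within range"


for pct in (1.5, 4.13, 8.0):
    print(f"{pct}%: {classify_density(pct)}")
```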

As a general rule of thumb, if the copy on your site makes sense to a human reading it, you should be fine. But if you repeat the same keyword five times in a row (Website Design, Website Design, Website Design, etc.), search engines can penalize your site or even ban it.
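
Back-to-back repetition like this is easy to catch automatically. This sketch (function name hypothetical) reports the longest run of consecutive repeats of a phrase, ignoring the punctuation between them; what run length counts as spam is left to your judgment, since search engines do not publish a cutoff.

```python
import re


def tokenize(text: str) -> list[str]:
    """Split text into lowercase words; commas and other punctuation are dropped."""
    return re.findall(r"[a-z0-9']+", text.lower())


def longest_consecutive_run(text: str, phrase: str) -> int:
    """Return the largest number of back-to-back repetitions of phrase in text."""
    words = tokenize(text)
    target = tokenize(phrase)
    n = len(target)
    if n == 0:
        return 0
    best = 0
    i = 0
    while i <= len(words) - n:
        run = 0
        # Keep extending while the phrase repeats immediately after itself.
        while words[i + run * n:i + (run + 1) * n] == target:
            run += 1
        best = max(best, run)
        i += max(run * n, 1)  # skip a found run, otherwise move one word ahead
    return best


stuffed = "Website Design, Website Design, Website Design, Website Design, Website Design"
natural = "Our website design team focuses on design that fits your website."
print(longest_consecutive_run(stuffed, "website design"))  # 5
print(longest_consecutive_run(natural, "website design"))  # 1
```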

Let us know if you’d like us to run a keyword density analysis on your site.

Global Marketing Plus was started to help small businesses compete on the same level as large corporations but for a lot less expense.
